To understand the current AI landscape, it is essential to distinguish between the AI application and the AI model. Most users interact with “doors” like ChatGPT, Microsoft Copilot, or the Gemini app; however, these are merely the interfaces. Behind each door is a giant AI brain—the model—doing the heavy lifting. These models are not databases of memorized facts; rather, they are trained on massive amounts of text, code, and websites to recognize patterns in language.

At their core, these models work by predicting the next word (more precisely, the next “token”) in a sequence. While it may look like the AI is “thinking,” it is actually a sophisticated mathematical function that assigns a probability to every possible next token based on the text provided. This function is controlled by parameters (or weights), continuous numerical values that determine the model’s behavior. Modern Large Language Models (LLMs) can contain hundreds of billions of these parameters, depending on the architecture. During training, the model’s predictions are compared against the actual next tokens in trillions of tokens of real-world text, and backpropagation calculates how each parameter should be nudged to reduce the error. Repeated at enormous scale, this process makes the model’s predictions uncannily fluent.
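To make the idea concrete, here is a minimal Python sketch of next-token prediction and a single training step. It assumes a made-up four-word vocabulary and made-up scores (“logits”) standing in for a real model’s output: the softmax function turns the scores into probabilities, and the update nudges the scores toward the true next token. In a real LLM the logits are computed by billions of parameters and backpropagation carries this same error signal back through all of them; as a simplification, this sketch adjusts the scores directly.

```python
import math

def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical toy vocabulary and scores for the context "The cat sat on the"
vocab = ["mat", "roof", "moon", "banana"]
logits = [2.0, 1.0, 0.1, -1.0]  # invented numbers standing in for a model's output

probs = softmax(logits)
for word, p in zip(vocab, probs):
    print(f"{word}: {p:.3f}")

# One training step: the true next token was "mat" (index 0).
# For softmax + cross-entropy loss, the gradient w.r.t. each logit is (p - target).
target = 0
learning_rate = 0.5
loss_before = -math.log(probs[target])
grads = [p - (1.0 if i == target else 0.0) for i, p in enumerate(probs)]
logits = [x - learning_rate * g for x, g in zip(logits, grads)]
loss_after = -math.log(softmax(logits)[target])
print(f"loss before: {loss_before:.3f}, after: {loss_after:.3f}")
```

Running it prints a probability for each candidate word, with “mat” the most likely, and shows the loss (a measure of how “surprised” the model was by the true word) dropping after one update.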

The Architecture of Large Language Models (LLMs)

The “Large” in LLM refers to both the parameter count and the staggering scale of the training data. For example, it would take a human over 2,600 years of non-stop reading to consume the text used to train GPT-3. The computation required to train the largest models is equally immense: a standard computer working through the operations one at a time would need over 100 million years. Training is feasible only because specialized chips such as GPUs run enormous numbers of calculations in parallel.

A pivotal moment in LLM history was the introduction of the Transformer architecture in 2017. Older models read text sequentially, one word at a time; Transformers process all the words in a passage in parallel, which makes them far more efficient to train at scale. At their heart is an “attention” mechanism that lets the model refine the meaning of each word based on its context. For instance, it can mathematically distinguish whether the word “bank” refers to a financial institution or the side of a river by looking at all the surrounding words at once.
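The attention idea can be sketched in a few lines of Python. This is a toy illustration of scaled dot-product attention, not a real Transformer layer: the two-dimensional embeddings (one axis loosely meaning “nature,” the other “finance”) are invented for the example, and the embeddings are used directly as queries, keys, and values, whereas real models first multiply them by learned projection matrices and use hundreds or thousands of dimensions.

```python
import math

def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention: each query mixes all values, weighted by similarity."""
    d = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(dimension)
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        # Weighted average of the value vectors
        mixed = [sum(w * v[j] for w, v in zip(weights, values)) for j in range(len(values[0]))]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights

# Hypothetical 2-D embeddings: axis 0 ~ "nature", axis 1 ~ "finance".
tokens = ["the", "river", "bank"]
emb = {
    "the": [0.1, 0.1],
    "river": [1.0, 0.0],
    "bank": [0.5, 0.5],  # ambiguous: exactly halfway between the two senses
}
x = [emb[t] for t in tokens]

# Simplification: the embeddings serve directly as queries, keys and values.
out, weights = attention(x, x, x)
print("attention weights for 'bank':", [round(w, 3) for w in weights[2]])
print("contextualized 'bank':", [round(v, 3) for v in out[2]])
```

The ambiguous “bank” vector starts halfway between the two senses; after attention it leans toward the “nature” axis because of “river.” Swapping “river” for a word placed on the finance axis, such as a hypothetical “money” at [0.0, 1.0], would pull it the other way.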

Beyond the initial “pre-training” on internet text, chatbots undergo Reinforcement Learning from Human Feedback (RLHF). In this stage, human reviewers rank the model’s responses, and those rankings are used to further tune the parameters so that the model behaves as a helpful, safe assistant rather than a mere text completer.
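One common way to turn human rankings into a training signal is to fit a separate reward model on pairs of responses, using a pairwise (Bradley-Terry style) loss that rewards scoring the preferred response higher. The sketch below assumes a tiny linear reward model over three hand-made, hypothetical features; real reward models are themselves large neural networks that read the full text, and the chatbot is afterwards fine-tuned (for example with an algorithm such as PPO) to produce responses the reward model scores highly. That second stage is not shown.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def reward(weights, features):
    """Hypothetical linear reward model: one score per response."""
    return sum(w * f for w, f in zip(weights, features))

# Hypothetical hand-made features per response: [answers_question, is_polite, is_harmful]
comparisons = [
    # (features of the response humans preferred, features of the rejected one)
    ([1.0, 1.0, 0.0], [0.0, 1.0, 0.0]),
    ([1.0, 0.0, 0.0], [1.0, 0.0, 1.0]),
    ([1.0, 1.0, 0.0], [1.0, 0.0, 0.0]),
]

weights = [0.0, 0.0, 0.0]
lr = 0.5
for epoch in range(200):
    for chosen, rejected in comparisons:
        margin = reward(weights, chosen) - reward(weights, rejected)
        # Pairwise loss is -log(sigmoid(margin)); its gradient w.r.t. margin is -(1 - sigmoid(margin)).
        # Gradient descent therefore moves the weights along (chosen - rejected).
        scale = 1.0 - sigmoid(margin)
        weights = [w + lr * scale * (c - r) for w, c, r in zip(weights, chosen, rejected)]

print("learned weights:", [round(w, 2) for w in weights])
```

After training, the weights are positive for answering the question and for politeness and negative for harmful content, mirroring the preferences encoded in the comparisons.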

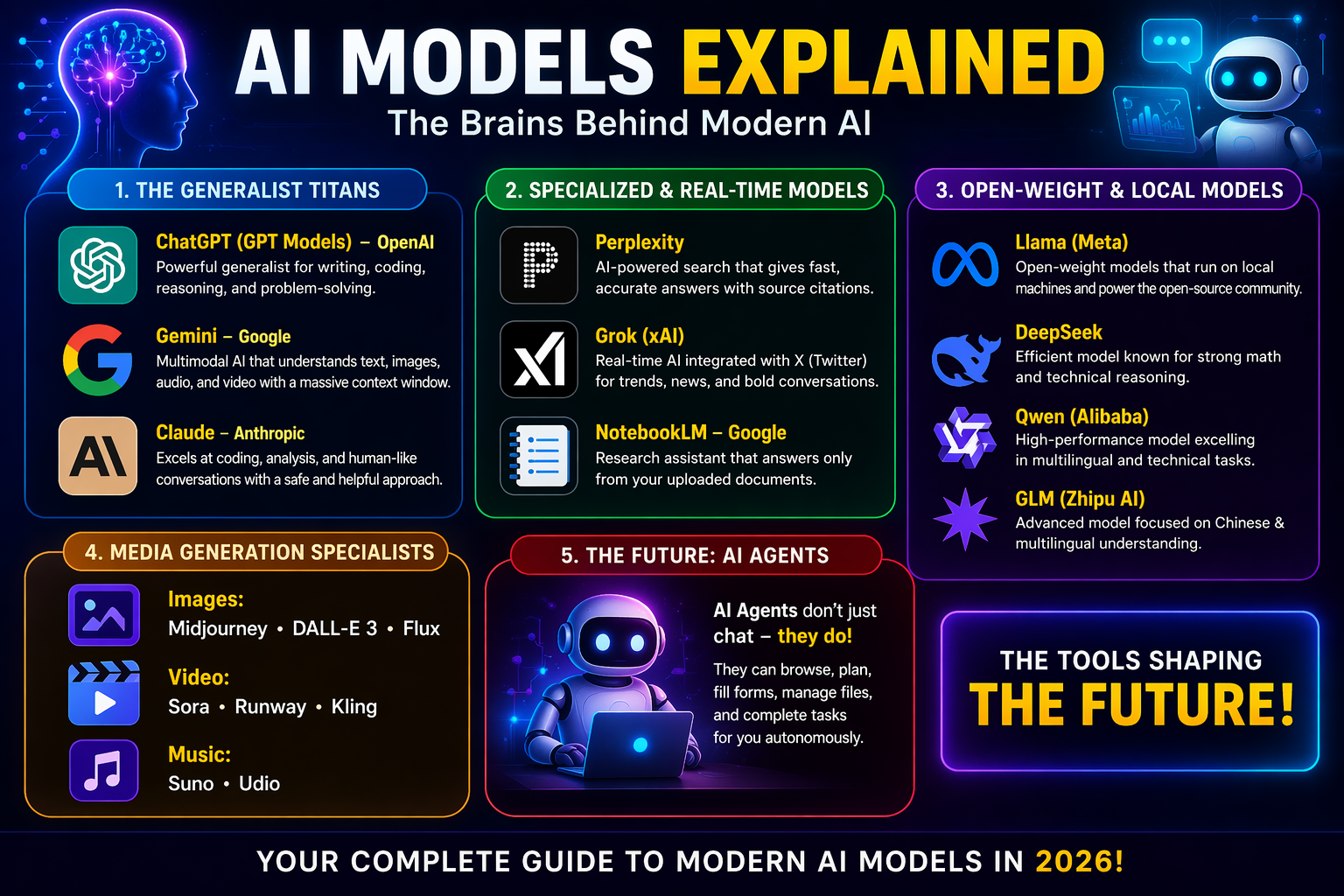

A Comprehensive Guide to Modern AI Models

By 2026, the AI ecosystem has branched into several specialized categories, each with distinct “superpowers.”

1. The Generalist Titans

- GPT (OpenAI): The flagship GPT model is a well-rounded generalist that handles writing, coding, and image analysis. Its most frequently cited strength is instruction-following: it tends to carry out complex, multi-step instructions more reliably than its competitors. OpenAI also offers the o1 series, which is specifically designed for deep reasoning and complex math problems.

- Gemini (Google): Known for its massive context window (up to 2 million tokens, roughly the length of 10 average novels), Gemini can take in entire books or hour-long videos in a single pass. It is natively multimodal, meaning it can “watch” video and “listen” to audio directly rather than converting them to text first. Gemini Pro is deeply integrated into Google Docs and Gmail, while Gemini 3 Flash offers a faster, cheaper alternative for everyday tasks.

- Claude (Anthropic): Often cited as the best choice for coding and deep analysis, the flagship Claude Opus is widely reported to write working code more consistently than its rivals. It is also praised for its human-like tone and its ability to match a specific writing style or voice, which makes it well suited to polished first drafts.

2. Specialized and Real-Time Models

- Perplexity: A “search scalpel” designed for finding accurate facts fast. Unlike general chatbots, it actively searches the live web and backs its answers with citations to the sources it used.

- Grok (xAI): Integrated directly into the X (formerly Twitter) platform, Grok excels at analyzing real-time trends and breaking news. It is known for a more relaxed, conversational tone and its willingness to answer sensitive questions that other models might refuse.

- NotebookLM (Google): A specialized research tool designed for situations where accuracy is critical. It acts as a “walled garden,” answering questions only from the sources the user provides, which reduces the risk of hallucination.
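The “walled garden” behavior can be illustrated with a deliberately simple sketch: answer only when a passage from the user’s own sources matches the question, cite that source, and otherwise decline. This is not how NotebookLM is actually implemented. It assumes plain keyword overlap with a small, hypothetical stopword list, where production systems would use embedding-based retrieval and a language model constrained to the retrieved text; the function name `grounded_answer` and the sample sources are invented for illustration.

```python
import re

# Hypothetical minimal stopword list so common words don't count as matches
STOPWORDS = {"the", "is", "a", "an", "of", "to", "for", "when", "who", "what", "was"}

def tokenize(text):
    words = set(re.findall(r"[a-z0-9]+", text.lower()))
    return words - STOPWORDS

def grounded_answer(question, sources, min_overlap=2):
    """Return the best-matching passage with its source label, or decline."""
    q_words = tokenize(question)
    best_label, best_passage, best_score = None, None, 0
    for label, passage in sources.items():
        score = len(q_words & tokenize(passage))
        if score > best_score:
            best_label, best_passage, best_score = label, passage, score
    if best_score < min_overlap:
        # Nothing in the user's sources supports an answer, so refuse rather than guess
        return "I can't find that in the provided sources."
    return f"{best_passage} [{best_label}]"

# Hypothetical user-provided sources
sources = {
    "notes.pdf": "The project deadline was moved to March 14.",
    "email.txt": "The budget for the project is 12,000 dollars.",
}
print(grounded_answer("When is the project deadline?", sources))
print(grounded_answer("Who won the 1998 World Cup?", sources))
```

The first question is answered from `notes.pdf` with a citation; the second has no support in the sources, so the function declines instead of guessing.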

3. Open-Weight and Local Models

These models make their trained weights publicly available, so users can run them on their own hardware, keeping their data private and avoiding subscription fees.

- DeepSeek: A powerful model focused on math and technical reasoning that can be run at a fraction of the cost of proprietary models.

- Llama (Meta): The foundation for much of the open-model community, available in various sizes that run on everything from gaming PCs to massive data centers.

- Qwen and GLM: High-performance models from Alibaba and Zhipu AI that excel at multilingual tasks and technical benchmarks.

4. Media Generation Specialists

Images:

- Midjourney remains the leader for artistic quality, while DALL-E 3 is the easiest to use within ChatGPT. Flux is the open-source leader for prompt precision.

Video:

- Sora leads in cinematic quality and realistic physics, while Runway 5 offers advanced creative controls like “motion brushes”. Kling is optimized for speed, generating audio and video simultaneously.

Music:

- Suno and Udio can generate full songs with vocals and instruments from a single text prompt, with Udio focusing on licensed training data through partnerships with major music labels.

The Future: AI Agents

The next phase of AI is the shift from “chatting” to “doing.” AI agents (such as OpenAI’s Operator or Google’s Project Mariner) are systems that don’t just give advice; they execute tasks. These agents can navigate your screen, fill out forms, and manage files, effectively acting as an autonomous digital intern to complete multi-step workflows on your behalf.
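Under the hood, most agents run some form of loop: look at the current state, decide on the next action, execute it with a tool, record the result, and repeat until the goal is met. The sketch below shows that loop in Python under heavy assumptions: the `Environment`, the two tools, and `scripted_planner` are all hypothetical, and the planner follows a fixed script where a real agent would ask an LLM to choose each step based on what it observes. It does not reflect how Operator or Project Mariner are built.

```python
from dataclasses import dataclass, field

@dataclass
class Environment:
    """Hypothetical stand-in for the browser or desktop an agent acts on."""
    form: dict = field(default_factory=dict)
    files: dict = field(default_factory=dict)

# Hypothetical tools the agent may call
def fill_field(env, name, value):
    env.form[name] = value
    return f"filled {name}"

def save_file(env, path, content):
    env.files[path] = content
    return f"saved {path}"

TOOLS = {"fill_field": fill_field, "save_file": save_file}

def scripted_planner(goal, history):
    """Hypothetical planner: a real agent would ask an LLM to pick the next action."""
    plan = [
        ("fill_field", {"name": "email", "value": "me@example.com"}),
        ("fill_field", {"name": "date", "value": "2026-03-14"}),
        ("save_file", {"path": "confirmation.txt", "content": "Booked."}),
    ]
    return plan[len(history)] if len(history) < len(plan) else None

def run_agent(goal, env, planner, max_steps=10):
    history = []
    for _ in range(max_steps):  # step limit guards against endless loops
        action = planner(goal, history)
        if action is None:  # planner signals the goal is complete
            break
        tool_name, args = action
        observation = TOOLS[tool_name](env, **args)  # act, then observe the result
        history.append((tool_name, args, observation))
    return history

env = Environment()
for step in run_agent("Book an appointment", env, scripted_planner):
    print(step[2])
```

The `max_steps` limit is a simple safeguard: an agent whose planner never declares the task finished still stops after a bounded number of actions.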